● The Gradient

📅 08/03/2024 at 18:55

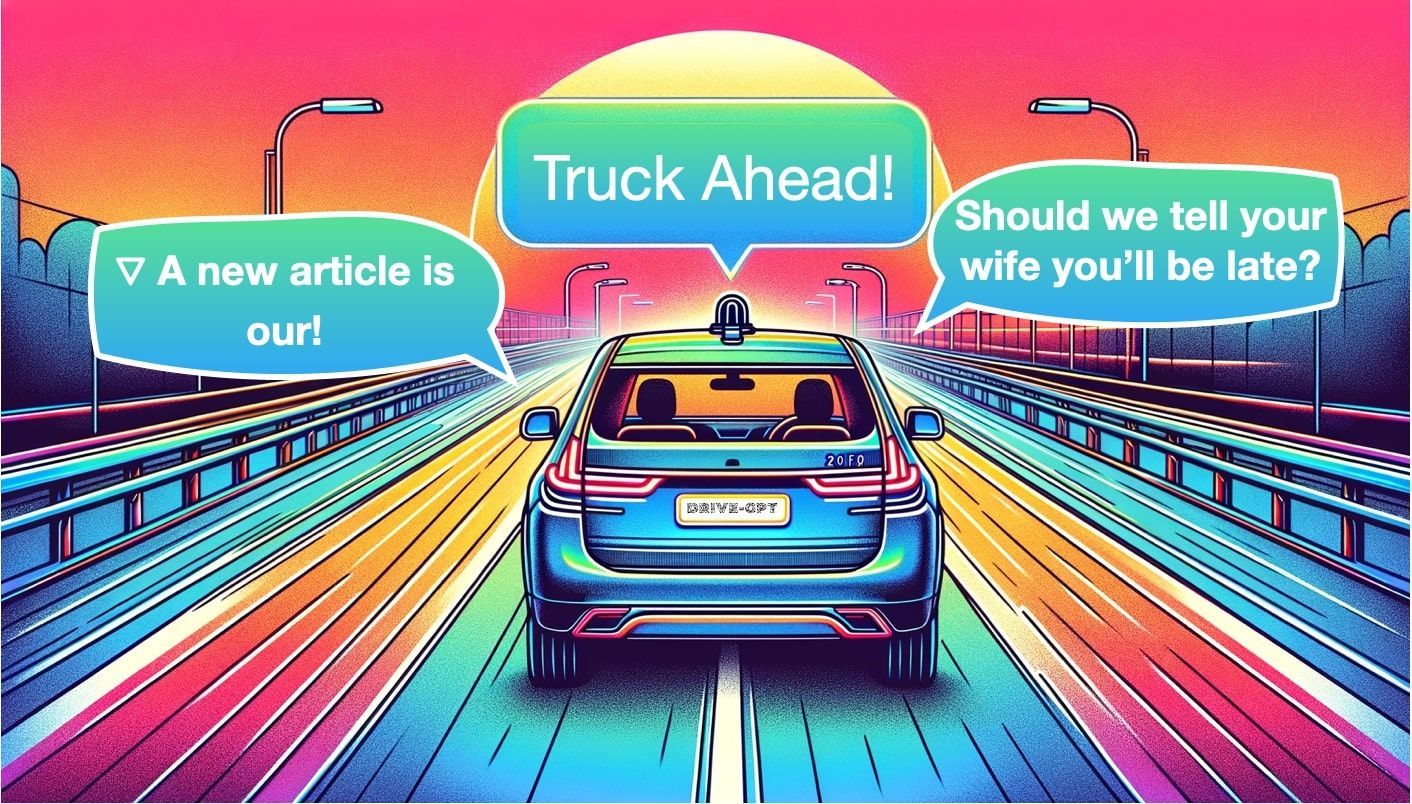

Car-GPT: Could LLMs finally make self-driving cars happen?

Data Science

👤 Jérémy Cohen

In 1928, London was in the middle of a terrible health crisis, devastated by bacterial diseases like pneumonia, tuberculosis, and meningitis. Confined in sterile laboratories, scientists and doctors were stuck in a relentless cycle of trial and error, using traditional medical approaches to solve complex problems.

Then, in September 1928, an accidental event changed the course of the world. A Scottish doctor named Alexander Fleming forgot to close a petri dish (the transparent circular box you used in science class), which got contaminated by mold. Fleming noticed something peculiar: all the bacteria close to the mold were dead, while the others survived.

"What was that mold made of?" wondered Dr. Fleming. He found that the mold produced a substance, penicillin, that was a powerful killer of bacteria. This groundbreaking discovery led to the antibiotics we use today. In a world where doctors were relying on existing, well-studied approaches, penicillin was the unexpected answer.

Self-driving cars may be going through a similar moment. Back in the 2010s, most of them were built using what we call a "modular" approach. The autonomy software is split into several modules, such as Perception (the task of seeing the world), Localization (the task of accurately localizing yourself in the world), and Planning (the task of creating a trajectory for the car to follow, and implementing the "brain" of the car). Finally, all of these feed into the last module, Control, which generates commands such as "steer 20° right". So this was the well-known approach. But a decade later, companies started to take another discipline very seriously: End-To-End learning.
The core idea is to replace every module with a single neural network predicting steering and acceleration, but as you can imagine, this introduces a black box problem.

The 4 Pillars of Self-Driving Cars are Perception, Localization, Planning, and Control. Could a Large Language Model replicate them? (source)

These approaches are known, but they don't solve the self-driving problem yet. So we could wonder: "What if LLMs (Large Language Models), currently revolutionizing the world, were the unexpected answer to autonomous driving?"

This is what we're going to see in this article, beginning with a simple explanation of what LLMs are and then diving into how they could benefit autonomous driving.

Preamble: LLMs-what?

Before you read this article, you must know something: I'm not an LLM pro, at all. This means I know too well the struggle of learning about them. I understand what it's like to google "learn LLM", then see 3 sponsored posts asking you to download e-books (in which nothing concrete appears)... then see 20 ultimate roadmaps and GitHub repos, where step 1/54 is to view a 2-hour long video (and no one knows what step 54 is because it's so looooooooong).

So, instead of putting you through this pain myself, let's just break down what LLMs are in 3 key ideas: Tokenization, Transformers, and Processing Language.

Tokenization

In ChatGPT, you input a piece of text, and it returns text, right? Well, what's actually happening is that your text is first converted into tokens.

Example of tokenization of a sentence: each word becomes a "token"

But what's a token, you might ask? Well, a token can correspond to a word, a character, or anything we want.
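To make the idea of a token concrete, here is a minimal sketch in Python. It is not how ChatGPT or any real model tokenizes text: the function names (`word_tokens`, `char_tokens`, `build_vocab`, `encode`), the whitespace-splitting rule, and the example sentence are all assumptions made for illustration. It only shows that the same sentence can be cut into words or into characters, and that each distinct token can then be given an integer ID.

```python
def word_tokens(text):
    """Split on whitespace: one token per word (a deliberately naive rule)."""
    return text.lower().split()


def char_tokens(text):
    """One token per character, spaces included."""
    return list(text.lower())


def build_vocab(tokens):
    """Give each distinct token an integer ID, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def encode(tokens, vocab):
    """Replace every token by its integer ID."""
    return [vocab[tok] for tok in tokens]


# Hypothetical example sentence, chosen only for illustration.
sentence = "the car sees the road"

words = word_tokens(sentence)
vocab = build_vocab(words)
ids = encode(words, vocab)

print(words)  # ['the', 'car', 'sees', 'the', 'road']
print(ids)    # [0, 1, 2, 0, 3]
print(char_tokens("car"))  # ['c', 'a', 'r']
```

Notice that "the" appears twice and gets the same ID both times. Real tokenizers typically learn a subword vocabulary from a large corpus instead of splitting on spaces, but the principle of mapping pieces of text to integers is the same.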
Think about it: if you were to send a sentence to a neural network, you wouldn't plan on sending actual words, would you? The input of a neural network is always numbers, so you need to convert your text into numbers; this is tokenization.

What tokenization actually is: a conversion from words to numbers

Depending on the model (ChatGPT, LLAMA, etc.), a token can mean different things: a word, a subword, or even a character. We could take the English vocabulary and define its words as tokens, or take parts of words (subwords) and handle even more complex inputs. For example, the word "a" could be token 1, and the word "abracadabra" could be token 121.

Transformers

Now that we understand how to convert a sentence into a series of numbers, we can send that series into our neural network! At a high level, we have the following structure:

A Transformer is an Encoder-Decoder architecture that takes a sequence of tokens as input and outputs another sequence of tokens

If you start looking around, you will see that some models are based on an encoder-decoder architecture, some others are purely encoder-based, and others, like GPT, are purely decoder-based.

Whatever the case, they all share the core Transformer blocks: multi-head attention, layer normalization, addition and concatenation blocks, cross-attention, etc. This is just a series of attention blocks getting you to the output. So how does this word prediction work?

The output / Next-Word Prediction

The Encoder learns features and understands context... But what does the decoder do? In the case of object detection, the decoder is predicting bou
